RealityBridge

Creating XR content requires manual 3D modeling, importing, and scene placement — a slow pipeline disconnected from the physical objects designers actually want to reference.

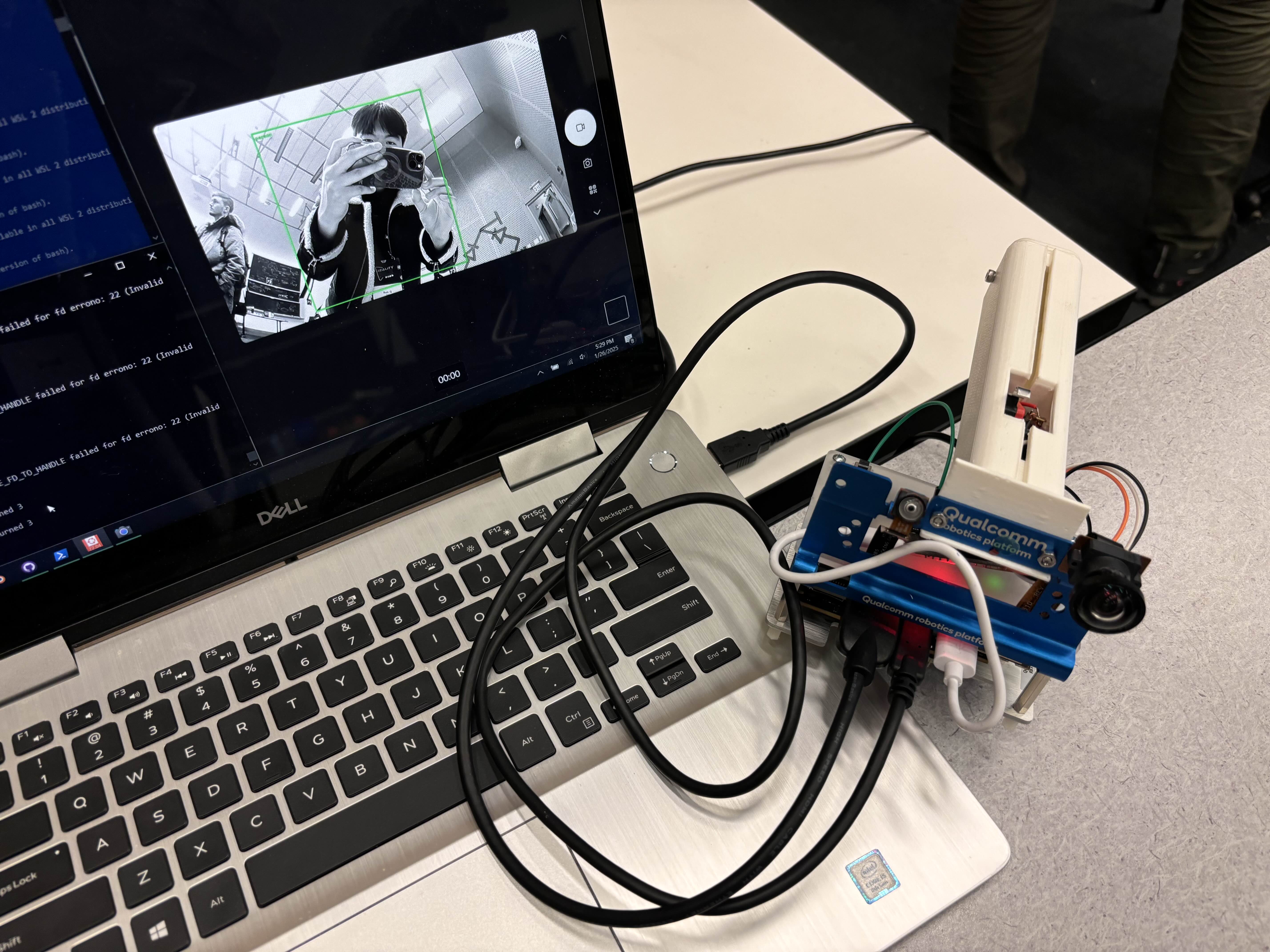

Built a physical copy-paste interface: point a camera at a real object, press Copy, and AI recognition instantly spawns a digital 3D representation in your XR environment.

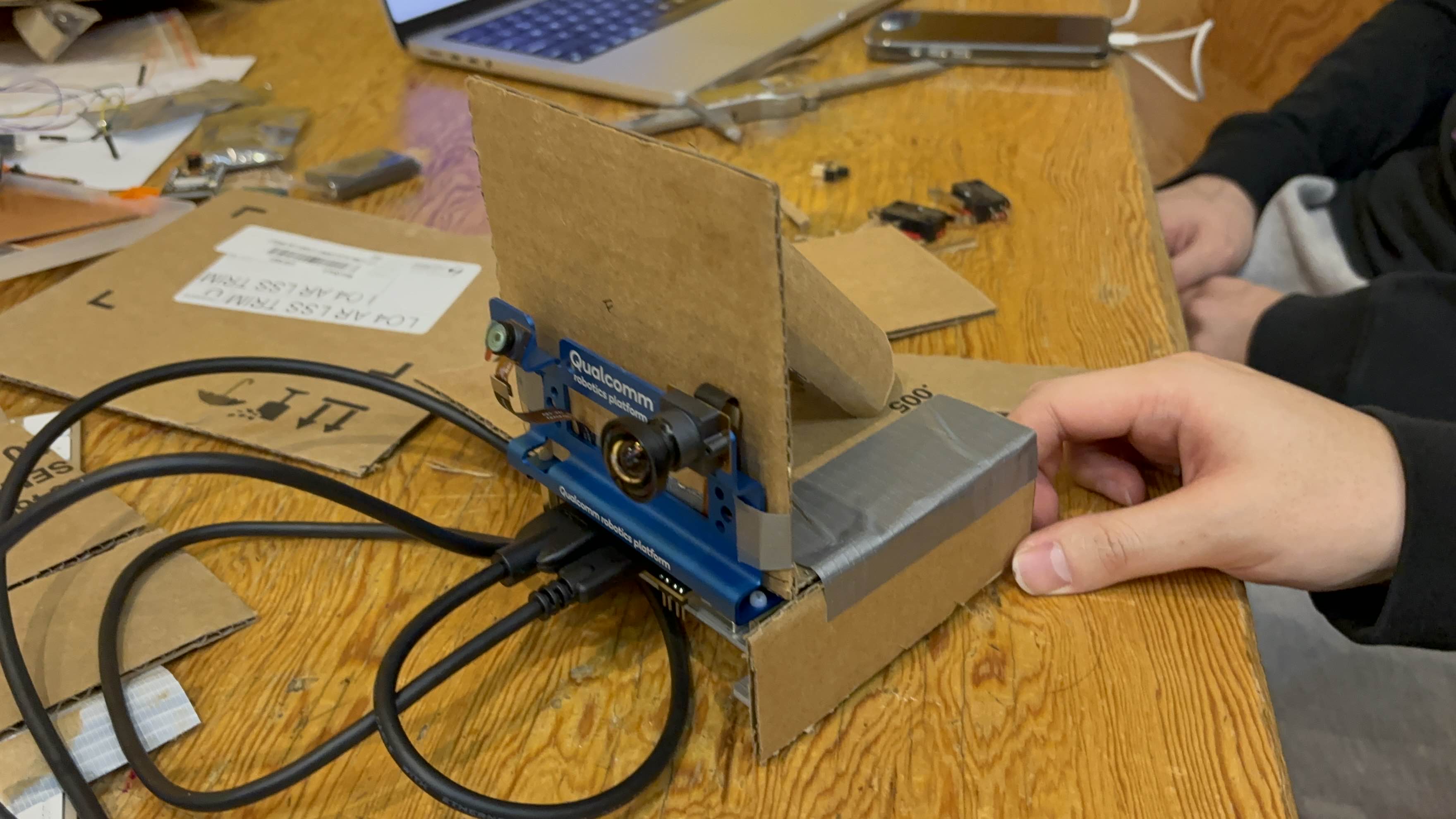

Working research prototype demonstrating real-time physical-to-digital object transfer, combining edge AI vision, a custom hardware controller, and a Unity XR environment into a seamless interaction.

Overview

RealityBridge asks a simple question: what if reality itself were your XR authoring tool? Instead of modeling 3D assets from scratch, you point a camera at a real cup, book, or apple, press a button, and it appears in your virtual world. The system combines edge AI object detection on a Qualcomm RB3, physical copy/paste triggers via ESP32, Redis-based messaging, and Unity XR rendering into a single tangible interaction flow.
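
To make the interaction flow concrete, here is a minimal Python sketch of how detection results and button presses might travel over a publish/subscribe bus and end in a spawn request. It is an illustration under stated assumptions, not the prototype's actual code: the channel names (`detections`, `buttons`, `spawn`), the JSON message fields, and the `Clipboard` class are all hypothetical, and `InMemoryBus` is a tiny stand-in for Redis pub/sub so the example runs without a server. In the real system the detector would run on the RB3, the button events would come from the ESP32, and the spawn consumer would be the Unity scene.

```python
import json
from collections import defaultdict
from dataclasses import dataclass, asdict
from typing import Callable, List, Optional


class InMemoryBus:
    """Stand-in for Redis pub/sub (assumption), so the sketch needs no server."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)

    def subscribe(self, channel: str, handler: Callable[[str], None]) -> None:
        self._subscribers[channel].append(handler)

    def publish(self, channel: str, payload: str) -> None:
        # Deliver the payload synchronously to every handler on the channel.
        for handler in self._subscribers[channel]:
            handler(payload)


@dataclass
class Detection:
    """Hypothetical message shape for one recognized object."""
    label: str
    confidence: float


class Clipboard:
    """Tracks the latest detection and reacts to copy/paste button events."""

    def __init__(self, bus: InMemoryBus) -> None:
        self.bus = bus
        self.latest: Optional[Detection] = None
        self.copied: Optional[Detection] = None
        bus.subscribe("detections", self.on_detection)  # hypothetical channel
        bus.subscribe("buttons", self.on_button)        # hypothetical channel

    def on_detection(self, payload: str) -> None:
        # Remember whatever the camera currently sees.
        self.latest = Detection(**json.loads(payload))

    def on_button(self, payload: str) -> None:
        action = json.loads(payload)["action"]
        if action == "copy" and self.latest is not None:
            self.copied = self.latest
        elif action == "paste" and self.copied is not None:
            # Ask the XR scene to spawn the copied object.
            self.bus.publish("spawn", json.dumps(asdict(self.copied)))


def run_demo() -> List[str]:
    """Simulate: camera sees a cup, user presses Copy, then Paste."""
    bus = InMemoryBus()
    spawned: List[str] = []
    bus.subscribe("spawn", lambda p: spawned.append(json.loads(p)["label"]))
    Clipboard(bus)  # stays alive through the bus's handler references
    bus.publish("detections", json.dumps({"label": "cup", "confidence": 0.91}))
    bus.publish("buttons", json.dumps({"action": "copy"}))
    bus.publish("buttons", json.dumps({"action": "paste"}))
    return spawned


if __name__ == "__main__":
    print(run_demo())
```

Keeping the detector, the button controller, and the renderer decoupled behind a message bus means each piece can be swapped or tested on its own, which is one plausible reason to put a broker such as Redis between them.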

Gallery